Effective self-service options are becoming increasingly critical for contact centers, but implementing them well presents unique challenges.

Amazon Lex provides your Amazon Connect contact center with chatbot functionalities such as automatic speech recognition (ASR) and natural language understanding (NLU) capabilities through voice and text channels. The bot takes natural language speech or text input, recognizes the intent behind the input, and fulfills the user’s intent by invoking the appropriate response.

Callers can have diverse accents, pronunciation, and grammar. Combined with background noise, this can make it challenging for speech recognition to accurately understand statements. For example, “I want to track my order” may be misrecognized as “I want to truck my holder.” Failed intents like these frustrate customers who have to repeat themselves, get routed incorrectly, or are escalated to live agents—costing businesses more.

Amazon Bedrock gives developers access to foundation models (FMs) for building and scaling generative AI-based applications for the modern contact center. FMs delivered by Amazon Bedrock, such as Amazon Titan and Anthropic Claude, are pretrained on internet-scale datasets that give them strong NLU capabilities such as sentence classification, question answering, and enhanced semantic understanding despite speech recognition errors.

In this post, we explore a solution that uses FMs delivered by Amazon Bedrock to enhance intent recognition of Amazon Lex integrated with Amazon Connect, ultimately delivering an improved self-service experience for your customers.

Overview of solution

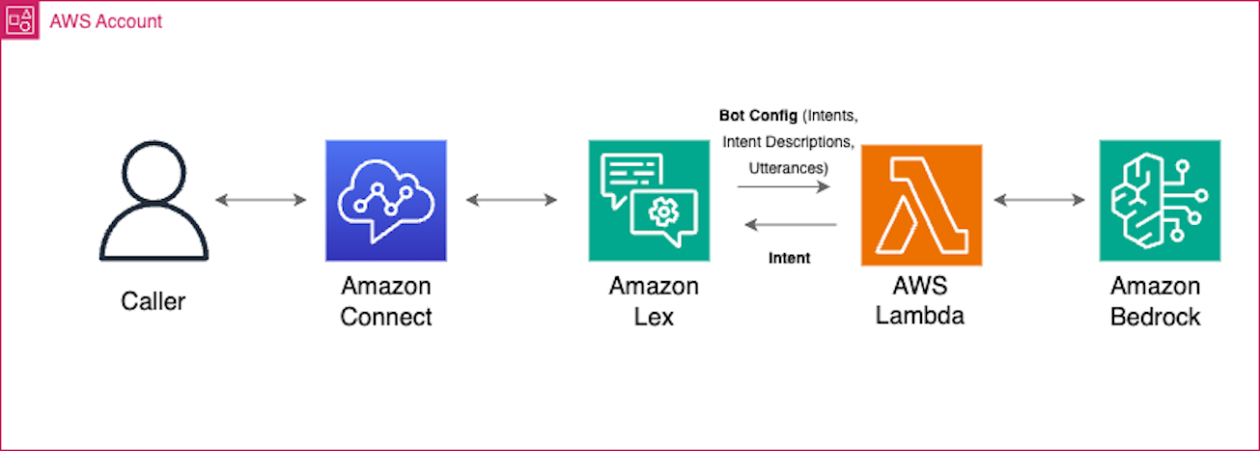

The solution uses Amazon Connect, Amazon Lex, AWS Lambda, and Amazon Bedrock in the following steps:

- An Amazon Connect contact flow integrates with an Amazon Lex bot via the GetCustomerInput block.

- When the bot fails to recognize the caller’s intent and defaults to the fallback intent, a Lambda function is triggered.

- The Lambda function takes the transcript of the customer utterance and passes it to a foundation model in Amazon Bedrock.

- Using its advanced natural language capabilities, the model determines the caller’s intent.

- The Lambda function then directs the bot to route the call to the correct intent for fulfillment.

By using Amazon Bedrock foundation models, the solution enables the Amazon Lex bot to understand intents despite speech recognition errors. This results in smooth routing and fulfillment, preventing escalations to agents and frustrating repetitions for callers.

The following diagram illustrates the solution architecture and workflow.

In the following sections, we look at the key components of the solution in more detail.

Lambda functions and the LangChain Framework

When the Amazon Lex bot invokes the Lambda function, it sends an event message that contains bot information and the transcription of the utterance from the caller. Using this event message, the Lambda function dynamically retrieves the bot’s configured intents, intent description, and intent utterances and builds a prompt using LangChain, which is an open source machine learning (ML) framework that enables developers to integrate large language models (LLMs), data sources, and applications.
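The retrieval step described above could look roughly like the following. This is a minimal sketch, not the code from the sample repository: `collect_intents` is a hypothetical helper, `lex_client` is assumed to be a boto3 `lexv2-models` client (for example `boto3.client("lexv2-models")`), and the event is assumed to follow the Amazon Lex V2 Lambda input format with a `bot` object holding `id`, `version`, and `localeId`. Pagination of `list_intents` is ignored for brevity.

```python
from typing import Any, Dict, List


def collect_intents(lex_client: Any, event: Dict[str, Any]) -> List[Dict[str, Any]]:
    """Return ID, name, description, and sample utterances for each bot intent.

    Hypothetical helper: `lex_client` is assumed to expose the boto3
    `lexv2-models` operations `list_intents` and `describe_intent`.
    """
    bot = event["bot"]
    # These three identifiers are required by both Lex model-building calls.
    common = {
        "botId": bot["id"],
        "botVersion": bot["version"],
        "localeId": bot["localeId"],
    }

    intents = []
    # Assumption: the bot has few enough intents to fit in one page of results.
    summaries = lex_client.list_intents(**common)["intentSummaries"]
    for summary in summaries:
        detail = lex_client.describe_intent(intentId=summary["intentId"], **common)
        intents.append(
            {
                "id": summary["intentId"],
                "name": summary["intentName"],
                "description": summary.get("description", ""),
                "utterances": [
                    u["utterance"] for u in detail.get("sampleUtterances", [])
                ],
            }
        )
    return intents
```

Passing the client in as a parameter keeps the function easy to exercise with a stub, without network access or AWS credentials.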

An Amazon Bedrock foundation model is then invoked using the prompt, and a response is received with the predicted intent and confidence level. If the confidence level is greater than a set threshold, for example 80%, the function returns the identified intent to Amazon Lex with an action to delegate. If the confidence level is below the threshold, the function returns the default FallbackIntent with an action to close it.
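The threshold decision can be sketched as a small pure function. The names `choose_action` and `FALLBACK_INTENT` are illustrative assumptions rather than identifiers from the sample code, and the 0.8 default simply mirrors the 80% example above.

```python
from typing import Optional, Tuple

# Assumed name of the bot's built-in fallback intent.
FALLBACK_INTENT = "FallbackIntent"


def choose_action(
    predicted_intent: Optional[str],
    confidence: float,
    threshold: float = 0.8,
) -> Tuple[str, str]:
    """Return (intent_name, dialog_action_type) for the reply to Amazon Lex."""
    # Delegate only when the model named an intent AND was confident enough.
    if predicted_intent and confidence > threshold:
        return predicted_intent, "Delegate"
    # Otherwise stay on the fallback intent and close it.
    return FALLBACK_INTENT, "Close"
```

Keeping this logic separate from the model call makes the threshold easy to tune and test on its own.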

In-context learning, prompt engineering, and model invocation

We use in-context learning so that a foundation model can accomplish this task. In-context learning is the ability of LLMs to learn a task from the prompt alone, without being pretrained or fine-tuned for that particular task.

In the prompt, we first provide the instruction detailing what needs to be done. Then, the Lambda function dynamically retrieves and injects the Amazon Lex bot’s configured intents, intent descriptions, and intent utterances into the prompt. Finally, we provide it instructions on how to output its thinking and final result.

The following prompt template was tested on the text generation models Anthropic Claude Instant v1.2 and Anthropic Claude v2. We use XML tags to improve the performance of the model. We also leave room for the model to think before identifying the final intent, which improves its reasoning for choosing the right intent. The {intent_block} contains the intent IDs, intent descriptions, and intent utterances. The {input} block contains the transcribed utterance from the caller. Three backticks (```) are added at the end to help the model output a code block more consistently. A <STOP> sequence is added to stop it from generating further.
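As a rough illustration of the structure described above, and not the template from the sample repository, the sketch below fills an `{intent_block}` and an `{input}` placeholder using plain Python string formatting instead of LangChain. The wording of `PROMPT_TEMPLATE`, the XML tag names, and the helper names are all assumptions made for this example. Model-specific turn markers, the trailing backticks, and the stop sequence are left out.

```python
from typing import Any, Dict, List

# Hypothetical template: instruction, injected intents, caller input, output rules.
PROMPT_TEMPLATE = """Identify which intent best matches the caller's utterance.

<intents>
{intent_block}
</intents>

<input>
{input}
</input>

First reason inside <thinking> tags, then give the intent ID and a
confidence between 0 and 1."""


def build_intent_block(intents: List[Dict[str, Any]]) -> str:
    """Render each intent's ID, description, and utterances as XML-style tags."""
    parts = []
    for intent in intents:
        utterances = "\n".join(
            f"    <utterance>{u}</utterance>" for u in intent["utterances"]
        )
        parts.append(
            "<intent>\n"
            f"  <id>{intent['id']}</id>\n"
            f"  <description>{intent['description']}</description>\n"
            f"  <utterances>\n{utterances}\n  </utterances>\n"
            "</intent>"
        )
    return "\n".join(parts)


def build_prompt(intents: List[Dict[str, Any]], utterance: str) -> str:
    """Fill the template with the intent block and the transcribed utterance."""
    return PROMPT_TEMPLATE.format(
        intent_block=build_intent_block(intents), input=utterance
    )
```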

After the model has been invoked, we receive the following response from the foundation model:

Filter available intents based on contact flow session attributes

When using the solution as part of an Amazon Connect contact flow, you can further enhance the ability of the LLM to identify the correct intent by specifying the session attribute available_intents in the “Get customer input” block with a comma-separated list of intents, as shown in the following screenshot. By doing so, the Lambda function will only include these specified intents as part of the prompt to the LLM, reducing the number of intents that the LLM has to reason through. If the available_intents session attribute is not specified, all intents in the Amazon Lex bot will be used by default.
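The filtering behavior can be sketched as follows. This assumes the Lex V2 event carries session attributes under `sessionState.sessionAttributes`, that `available_intents` lists intent names, and that each intent is a dictionary with a `name` key. `filter_intents` is a hypothetical helper, not a function from the sample repository.

```python
from typing import Any, Dict, List


def filter_intents(
    intents: List[Dict[str, Any]], event: Dict[str, Any]
) -> List[Dict[str, Any]]:
    """Keep only intents named in the `available_intents` session attribute."""
    # sessionAttributes may be missing or null when no attributes are set.
    session_attrs = event.get("sessionState", {}).get("sessionAttributes") or {}
    raw = session_attrs.get("available_intents", "")

    # Tolerate spaces around commas and ignore empty entries.
    allowed = {name.strip() for name in raw.split(",") if name.strip()}

    # No attribute (or an empty one) means every intent stays available.
    if not allowed:
        return intents
    return [intent for intent in intents if intent["name"] in allowed]
```

For example, setting `available_intents` to `TrackOrder, CancelOrder` in the contact flow would leave only those two intents in the prompt.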

Lambda function response to Amazon Lex

After the LLM has determined the intent, the Lambda function responds in the specific format required by Amazon Lex to process the response.

If a matching intent is found above the confidence threshold, it returns a dialog action type Delegate to instruct Amazon Lex to use the selected intent and subsequently return the completed intent back to Amazon Connect. The response output is as follows:

If the confidence level is below the threshold or an intent was not recognized, a dialog action type Close is returned to instruct Amazon Lex to close the FallbackIntent, and return the control back to Amazon Connect. The response output is as follows:
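The two response shapes can be sketched as small builder functions. This follows the general Amazon Lex V2 Lambda response format (`sessionState` with a `dialogAction` and an `intent`); the helper names and the chosen `state` values (`InProgress` for Delegate, `Fulfilled` for Close) are assumptions for illustration and may differ from the sample's actual output.

```python
from typing import Any, Dict


def delegate_response(intent_name: str) -> Dict[str, Any]:
    """Ask Amazon Lex to continue the conversation with the selected intent."""
    return {
        "sessionState": {
            "dialogAction": {"type": "Delegate"},
            # Assumed state: the intent still has to be processed by Lex.
            "intent": {"name": intent_name, "state": "InProgress"},
        }
    }


def close_response(intent_name: str = "FallbackIntent") -> Dict[str, Any]:
    """Close the fallback intent and hand control back to Amazon Connect."""
    return {
        "sessionState": {
            "dialogAction": {"type": "Close"},
            # Assumed state: the fallback intent is marked as finished.
            "intent": {"name": intent_name, "state": "Fulfilled"},
        }
    }
```

A Lambda handler would return one of these dictionaries directly, depending on whether the predicted intent cleared the confidence threshold.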

The complete source code for this sample is available on GitHub.

Prerequisites

Before you get started, make sure you have the following prerequisites:

Implement the solution

To implement the solution, complete the following steps:

- Clone the repository

- Run the following command to initialize the environment and create an Amazon Elastic Container Registry (Amazon ECR) repository for our Lambda function’s image. Provide the AWS Region and ECR repository name that you would like to create.

- Update the ParameterValue fields in the scripts/parameters.json file:

  - ParameterKey ("AmazonECRImageUri") – Enter the repository URL from the previous step.

  - ParameterKey ("AmazonConnectName") – Enter a unique name.

  - ParameterKey ("AmazonLexBotName") – Enter a unique name.

  - ParameterKey ("AmazonLexBotAliasName") – The default is “prodversion”; you can change it if needed.

  - ParameterKey ("LoggingLevel") – The default is “INFO”; you can change it if required. Other valid values are DEBUG, WARN, and ERROR.

  - ParameterKey ("ModelID") – The default is “anthropic.claude-instant-v1”; you can change it if you need to use a different model.

  - The confidence score parameter – The default is “0.75”; you can change it if you need to update the confidence score threshold.

- Run the command to generate the CloudFormation stack and deploy the resources:

If you don’t want to build the contact flow from scratch in Amazon Connect, you can import the sample flow provided with this repository at /contactflowsample/samplecontactflow.json.

- Log in to your Amazon Connect instance. The account must be assigned a security profile that includes edit permissions for flows.

- On the Amazon Connect console, in the navigation pane, under Routing, choose Contact flows.

- Create a new flow of the same type as the one you are importing.

- Choose Save and Import flow.

- Select the file to import and choose Import.

When the flow is imported into an existing flow, the name of the existing flow is updated, too.

- Review and update any resolved or unresolved references as necessary.

- To save the imported flow, choose Save. To publish, choose Save and Publish.

- After you upload the contact flow, update the following configurations:

  - Update the GetCustomerInput blocks with the correct Amazon Lex bot name and version.

  - Under Manage Phone Number, update the number with the contact flow or IVR imported earlier.

Verify the configuration

Verify that the Lambda function created with the CloudFormation stack has an IAM role with permissions to retrieve bots and intent information from Amazon Lex (list and read permissions), and appropriate Amazon Bedrock permissions (list and read permissions).

In your Amazon Lex bot, for your configured alias and language, verify that the Lambda function was set up correctly. For the FallbackIntent, confirm that Fulfillment is set to Active so that the function runs whenever the FallbackIntent is triggered.

At this point, your Amazon Lex bot will automatically run the Lambda function and the solution should work seamlessly.

Test the solution

Let’s look at a sample intent, description, and utterance configuration in Amazon Lex and see how well the LLM performs with sample inputs that contain typos, grammar mistakes, and even a different language.

The following figure shows screenshots of our example. The left side shows the intent name, its description, and a single-word sample utterance. Without much configuration on Amazon Lex, the LLM is able to predict the correct intent (right side). In this test, we have a simple fulfillment message from the correct intent.

Clean up

To clean up your resources, run the following command to delete the ECR repository and CloudFormation stack:

Conclusion

By using Amazon Lex enhanced with LLMs delivered by Amazon Bedrock, you can improve the intent recognition performance of your bots. This provides a seamless self-service experience for a diverse set of customers, bridging the gap between accents and unique speech characteristics, and ultimately enhancing customer satisfaction.

To dive deeper and learn more about generative AI, check out these additional resources:

For more information on how you can experiment with the generative AI-powered self-service solution, see Deploy self-service question answering with the QnABot on AWS solution powered by Amazon Lex with Amazon Kendra and large language models.

About the Authors

Hamza Nadeem is an Amazon Connect Specialist Solutions Architect at AWS, based in Toronto. He works with customers throughout Canada to modernize their Contact Centers and provide solutions to their unique customer engagement challenges and business requirements. In his spare time, Hamza enjoys traveling, soccer and trying new recipes with his wife.

Parag Srivastava is a Solutions Architect at Amazon Web Services (AWS), helping enterprise customers with successful cloud adoption and migration. During his professional career, he has been extensively involved in complex digital transformation projects. He is also passionate about building innovative solutions around geospatial aspects of addresses.

Ross Alas is a Solutions Architect at AWS based in Toronto, Canada. He helps customers innovate with AI/ML and generative AI solutions that lead to real business outcomes. He has worked with a variety of customers from retail, financial services, technology, pharmaceutical, and other industries. In his spare time, he loves the outdoors and enjoying nature with his family.

Sangeetha Kamatkar is a Solutions Architect at Amazon Web Services (AWS), helping customers with successful cloud adoption and migration. She works with customers to craft highly scalable, flexible, and resilient cloud architectures that address customer business problems. In her spare time, she listens to music, watches movies, and enjoys gardening during the summer.
