Amazon Bedrock is a fully managed service that offers a choice of high-performing foundation models (FMs) from leading AI companies like AI21 Labs, Anthropic, Cohere, Meta, Mistral AI, Stability AI, and Amazon through a single API, along with a broad set of capabilities to build generative AI applications with security, privacy, and responsible AI.

Common generative AI use cases, including but not limited to chatbots, virtual assistants, conversational search, and agent assistants, use FMs to provide responses. Retrieval Augmented Generation (RAG) is a technique to optimize the output of FMs by providing context around the questions for these use cases. Fine-tuning the FM is recommended to further optimize the output to follow the brand and industry voice or vocabulary.

Custom Model Import for Amazon Bedrock, in preview now, allows you to import customized FMs created in other environments, such as Amazon SageMaker, Amazon Elastic Compute Cloud (Amazon EC2) instances, and on premises, into Amazon Bedrock. This post is part of a series that demonstrates various architecture patterns for importing fine-tuned FMs into Amazon Bedrock.

In this post, we provide a step-by-step approach to fine-tuning a Mistral model using SageMaker and importing it into Amazon Bedrock using the Custom Model Import feature. We use the OpenOrca dataset to fine-tune the Mistral model and use the SageMaker FMEval library to evaluate the fine-tuned model imported into Amazon Bedrock.

Key features

Some of the key features of Custom Model Import for Amazon Bedrock are:

- This feature allows you to bring your fine-tuned models and use the fully managed serverless capabilities of Amazon Bedrock.

- Currently, this feature supports the Llama 2, Llama 3, Flan, and Mistral model architectures at FP32, FP16, and BF16 precision, with further quantizations coming soon.

- To use this feature, you run the import process (covered later in this post) with your model weights stored in Amazon Simple Storage Service (Amazon S3).

- You can also import models created using Amazon SageMaker by referencing the SageMaker model Amazon Resource Name (ARN), which provides seamless integration with SageMaker.

- Amazon Bedrock automatically scales your model as traffic increases and, when the model is not in use, scales it down to zero, reducing your costs.

Let’s dive into a use case and see how easy it is to use this feature.

Solution overview

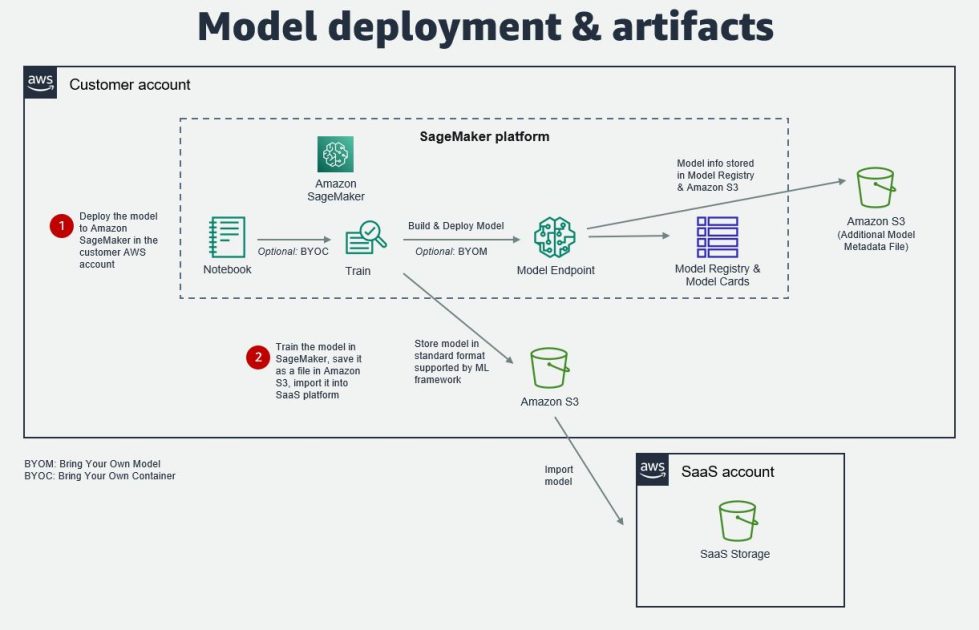

At the time of writing, the Custom Model Import feature in Amazon Bedrock supports models following the architectures and patterns in the following figure.

In this post, we walk through the following high-level steps:

- Fine-tune the model using SageMaker.

- Import the fine-tuned model into Amazon Bedrock.

- Test the imported model.

- Evaluate the imported model using the FMEval library.

The following diagram illustrates the solution architecture.

The process includes the following steps:

- We use a SageMaker training job to fine-tune the model using a SageMaker JupyterLab notebook. This training job reads the dataset from Amazon Simple Storage Service (Amazon S3) and writes the model back into Amazon S3. This model will then be imported into Amazon Bedrock.

- To import the fine-tuned model, you can use the Amazon Bedrock console, the Boto3 library, or APIs.

- An import job orchestrates the process to import the model and make the model available from the customer account.

- The import job copies all the model artifacts from the user’s account into an AWS managed S3 bucket.

- When the import job is complete, the fine-tuned model is made available for invocation from your AWS account.

- We use the SageMaker FMEval library in a SageMaker notebook to evaluate the imported model.

The copied model artifacts remain in the Amazon Bedrock account until the custom imported model is deleted from Amazon Bedrock. Deleting model artifacts in your AWS account S3 bucket doesn’t delete the model or the related artifacts in the Amazon Bedrock managed account. You can delete an imported model from Amazon Bedrock, along with all the copied artifacts, using the Amazon Bedrock console, the Boto3 library, or APIs.

Additionally, all data (including the model) remains within the selected AWS Region. The model artifacts are imported into the AWS operated deployment account using a virtual private cloud (VPC) endpoint, and you can encrypt your model data using an AWS Key Management Service (AWS KMS) customer managed key.

In the following sections, we dive deep into each of these steps to deploy, test, and evaluate the model.

Prerequisites

We use Mistral-7B-v0.3 in this post because it uses an extended vocabulary compared to its prior version produced by Mistral AI. This model is straightforward to fine-tune, and Mistral AI has provided example fine-tuned models. We use Mistral for this use case because this model supports a 32,000-token context capacity and is fluent in English, French, Italian, German, Spanish, and coding languages. Mistral AI’s Mixture of Experts (MoE) models, such as Mixtral, can achieve higher accuracy for customer support use cases, but Mistral-7B-v0.3 itself is a dense model.

Mistral-7B-v0.3 is a gated model on the Hugging Face model repository. You need to review the terms and conditions and request access to the model by submitting your details.

We use Amazon SageMaker Studio to preprocess the data and fine-tune the Mistral model using a SageMaker training job. To set up SageMaker Studio, refer to Launch Amazon SageMaker Studio. Refer to the SageMaker JupyterLab documentation to set up and launch a JupyterLab notebook. You submit a SageMaker training job to fine-tune the Mistral model from the SageMaker JupyterLab notebook, which can be found on the GitHub repo.

Fine-tune the model using QLoRA

To fine-tune the Mistral model, we apply QLoRA and Parameter-Efficient Fine-Tuning (PEFT) optimization techniques. In the provided notebook, you use the Fully Sharded Data Parallel (FSDP) PyTorch API to perform distributed model tuning. You use supervised fine-tuning (SFT) to fine-tune the Mistral model.

Prepare the dataset

The first step in the fine-tuning process is to prepare and format the dataset. After you transform the dataset into the Mistral Default Instruct format, you upload it as a JSONL file into the S3 bucket used by the SageMaker session, as shown in the following code:

You transform the dataset into Mistral Default Instruct format within the SageMaker training job as instructed in the training script (run_fsdp_qlora.py):
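The formatting step can be sketched as follows. This is a minimal illustration, not the contents of run_fsdp_qlora.py: the field names `system_prompt`, `question`, and `response` are assumed to match the OpenOrca columns, the `[INST] ... [/INST]` template is assumed to be the instruct format intended here, and the helper names `to_mistral_instruct` and `write_jsonl` are hypothetical.

```python
import json


def to_mistral_instruct(record):
    """Render one record as a single Mistral-instruct training string."""
    system = (record.get("system_prompt") or "").strip()
    question = record["question"].strip()
    # Fold the optional system prompt into the instruction turn.
    instruction = f"{system}\n\n{question}" if system else question
    return f"<s>[INST] {instruction} [/INST] {record['response'].strip()}</s>"


def write_jsonl(records, path):
    """Write one JSON object per line, each holding the formatted text."""
    with open(path, "w", encoding="utf-8") as f:
        for record in records:
            f.write(json.dumps({"text": to_mistral_instruct(record)}) + "\n")


# Made-up sample record, for illustration only.
sample = {
    "system_prompt": "You are a helpful assistant.",
    "question": "What is the capital of France?",
    "response": "Paris.",
}
print(to_mistral_instruct(sample))
```

Keeping the template in one small function makes it easy to apply the same formatting at training time and when building prompts for inference.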

Optimize fine-tuning using QLoRA

You optimize your fine-tuning using QLoRA, with the precision passed into the training script as a SageMaker training job parameter. QLoRA is an efficient fine-tuning approach that reduces memory usage enough to fine-tune a 65-billion-parameter model on a single 48 GB GPU while preserving full 16-bit fine-tuning task performance. In this notebook, you use the bitsandbytes library to set up quantization configurations, as shown in the following code:
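A rough sketch of what 4-bit quantization buys, plus a typical QLoRA quantization setup. The estimate covers model weights only (no activations, optimizer state, or adapter weights). The keyword values are illustrative assumptions rather than the notebook's actual settings. The helper names are hypothetical, and `BitsAndBytesConfig` is imported lazily so the file loads without `transformers` installed.

```python
def weight_memory_gb(num_params, bits_per_param):
    """Approximate memory for the weights alone, in gigabytes (1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


# Keyword arguments for transformers.BitsAndBytesConfig in a typical QLoRA setup.
# The specific values are illustrative assumptions, not the notebook's settings.
QLORA_QUANT_KWARGS = {
    "load_in_4bit": True,                  # store base weights in 4 bits
    "bnb_4bit_quant_type": "nf4",          # NormalFloat4 data type from the QLoRA paper
    "bnb_4bit_use_double_quant": True,     # also quantize the quantization constants
    "bnb_4bit_compute_dtype": "bfloat16",  # dtype used for the matrix multiplications
}


def build_quantization_config(kwargs=None):
    """Build the config object; requires transformers and bitsandbytes."""
    from transformers import BitsAndBytesConfig  # lazy import

    return BitsAndBytesConfig(**(kwargs or QLORA_QUANT_KWARGS))


fp16_gb = weight_memory_gb(65_000_000_000, 16)
nf4_gb = weight_memory_gb(65_000_000_000, 4)
print(fp16_gb, nf4_gb)  # 130.0 32.5
```

At 16 bits, the weights of a 65-billion-parameter model need about 130 GB, while at 4 bits they need about 32.5 GB, which is why the model can fit on a single 48 GB GPU.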

You use the LoRA config based on the QLoRA paper and Sebastian Raschka’s experiment, as shown in the following code. Two key points to consider from the Raschka experiment are that QLoRA offers 33% memory savings at the cost of a 39% increase in runtime, and that LoRA should be applied to all layers to maximize model performance.
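As a sketch of such a configuration, the following shows keyword arguments for `peft.LoraConfig` that apply LoRA to all linear layers, along with a small calculation of how few parameters LoRA trains per weight matrix. The rank, alpha, and dropout values are illustrative assumptions, not the values used in the notebook, and the helper names are hypothetical.

```python
def lora_added_params(d_out, d_in, rank):
    """Trainable parameters LoRA adds to one d_out x d_in weight matrix."""
    # LoRA learns B (d_out x rank) and A (rank x d_in) instead of a full update.
    return rank * (d_out + d_in)


# Keyword arguments for peft.LoraConfig; values are illustrative assumptions.
LORA_KWARGS = {
    "r": 16,
    "lora_alpha": 32,
    "lora_dropout": 0.05,
    "bias": "none",
    "target_modules": "all-linear",  # apply LoRA to every linear layer
    "task_type": "CAUSAL_LM",
}


def build_lora_config(kwargs=None):
    """Build the config object; requires the peft library."""
    from peft import LoraConfig  # lazy import

    return LoraConfig(**(kwargs or LORA_KWARGS))


full = 4096 * 4096
added = lora_added_params(4096, 4096, LORA_KWARGS["r"])
print(full, added, f"{added / full:.2%}")  # 16777216 131072 0.78%
```

For a 4096 x 4096 projection at rank 16, the adapter trains under 1% of the parameters a full update would touch, which is what makes applying LoRA to all layers affordable.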

You use SFTTrainer to fine-tune the Mistral model:

At the time of writing, only merged adapters are supported using the Custom Model Import feature for Amazon Bedrock. Let’s look at how to merge the adapter with the base model next.

Merge the adapters

Adapters are new modules added between layers of a pre-trained network. Creation of these new modules is possible by back-propagating gradients through a frozen, 4-bit quantized pre-trained language model into low-rank adapters in the fine-tuning process. To import the Mistral model into Amazon Bedrock, the adapters need to be merged with the base model and saved in Safetensors format. Use the following code to merge the model adapters and save them in Safetensors format:
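Conceptually, merging folds the low-rank update into the base weight: W_merged = W + (alpha / r) * B * A. The following toy sketch shows that arithmetic on plain Python lists. It is an illustration of the idea under that standard LoRA scaling assumption, not the PEFT implementation, and `merge_lora` is a hypothetical helper.

```python
def merge_lora(weight, lora_b, lora_a, alpha, rank):
    """Return weight + (alpha / rank) * (lora_b @ lora_a) using plain lists."""
    scale = alpha / rank
    rows, cols = len(weight), len(weight[0])
    merged = []
    for i in range(rows):
        row = []
        for j in range(cols):
            # Entry (i, j) of the low-rank product B @ A.
            delta = sum(lora_b[i][k] * lora_a[k][j] for k in range(rank))
            row.append(weight[i][j] + scale * delta)
        merged.append(row)
    return merged


base = [[1.0, 0.0], [0.0, 1.0]]  # frozen base weight (2 x 2)
b = [[1.0], [2.0]]               # LoRA B matrix (2 x 1)
a = [[3.0, 4.0]]                 # LoRA A matrix (1 x 2)
merged = merge_lora(base, b, a, alpha=2, rank=1)
print(merged)  # [[7.0, 8.0], [12.0, 17.0]]
```

In practice, the PEFT library performs this merge for every adapted layer through `merge_and_unload()`, and `save_pretrained(..., safe_serialization=True)` writes the merged weights in Safetensors format.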

To import the Mistral model into Amazon Bedrock, the model needs to be in an uncompressed directory within an S3 bucket accessible by the Amazon Bedrock service role used in the import job.
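One way to place an uncompressed model directory in S3 is to upload its files one by one while preserving relative paths. The sketch below assumes a client exposing `upload_file(Filename, Bucket, Key)`, as `boto3.client("s3")` does. The bucket name, prefix, and file names are placeholders, and a recording stub stands in for the real client so the example runs without AWS access.

```python
import os
import tempfile


def upload_directory(s3_client, local_dir, bucket, prefix):
    """Upload every file under local_dir to s3://bucket/prefix/, keeping relative paths."""
    uploaded = []
    for root, _dirs, files in os.walk(local_dir):
        for name in sorted(files):
            path = os.path.join(root, name)
            rel = os.path.relpath(path, local_dir).replace(os.sep, "/")
            key = f"{prefix.rstrip('/')}/{rel}"
            s3_client.upload_file(path, bucket, key)
            uploaded.append(key)
    return uploaded


class RecordingS3Client:
    """Stand-in for boto3.client("s3") so the sketch runs without AWS access."""

    def __init__(self):
        self.calls = []

    def upload_file(self, filename, bucket, key):
        self.calls.append((bucket, key))


with tempfile.TemporaryDirectory() as tmp:
    # Placeholder files standing in for the merged model artifacts.
    for name in ("config.json", "model.safetensors"):
        with open(os.path.join(tmp, name), "w") as f:
            f.write("{}")
    stub = RecordingS3Client()
    keys = upload_directory(stub, tmp, "my-example-bucket", "mistral-merged/")
print(keys)
```

With a real client, you would pass `boto3.client("s3")` instead of the stub and point `local_dir` at the directory holding the merged Safetensors files.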

Import the fine-tuned model into Amazon Bedrock

Now that you have fine-tuned the model, you can import the model into Amazon Bedrock. In this section, we demonstrate how to import the model using the Amazon Bedrock console or the SDK.

Import the model using the Amazon Bedrock console

To import the model using the Amazon Bedrock console, see Import a model with Custom Model Import. You use the Import model page as shown in the following screenshot to import the model from the S3 bucket.

After you successfully import the fine-tuned model, you can see the model listed on the Amazon Bedrock console.

Import the model using the SDK

The AWS Boto3 library supports importing custom models into Amazon Bedrock. You can use the following code to import a fine-tuned model from within the notebook into Amazon Bedrock. This is an asynchronous method.
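A minimal sketch of the import call, assuming the Boto3 `bedrock` client methods `create_model_import_job` and `get_model_import_job`. The job name, model name, role ARN, and S3 URI are placeholders, the helper names are hypothetical, and a stub client replaces `boto3.client("bedrock")` so the example runs without credentials. Because the import is asynchronous, the sketch polls the job status until it finishes.

```python
import time


def start_model_import(bedrock_client, job_name, model_name, role_arn, s3_uri):
    """Submit an import job and return its ARN."""
    response = bedrock_client.create_model_import_job(
        jobName=job_name,
        importedModelName=model_name,
        roleArn=role_arn,
        modelDataSource={"s3DataSource": {"s3Uri": s3_uri}},
    )
    return response["jobArn"]


def wait_for_import(bedrock_client, job_arn, poll_seconds=60, sleep=time.sleep):
    """Poll the job until it reaches a terminal status, then return the job description."""
    while True:
        job = bedrock_client.get_model_import_job(jobIdentifier=job_arn)
        if job["status"] in ("Completed", "Failed"):
            return job
        sleep(poll_seconds)


class StubBedrock:
    """Stand-in for boto3.client("bedrock"); returns canned responses."""

    def __init__(self):
        self.statuses = ["InProgress", "Completed"]
        self.request = None

    def create_model_import_job(self, **kwargs):
        self.request = kwargs
        return {"jobArn": "arn:aws:bedrock:us-east-1:111122223333:model-import-job/EXAMPLE"}

    def get_model_import_job(self, jobIdentifier):
        return {"status": self.statuses.pop(0), "jobArn": jobIdentifier}


stub = StubBedrock()
job_arn = start_model_import(
    stub,
    job_name="demo-import-job",
    model_name="demo-mistral-model",
    role_arn="arn:aws:iam::111122223333:role/ExampleBedrockImportRole",
    s3_uri="s3://my-example-bucket/mistral-merged/",
)
final = wait_for_import(stub, job_arn, poll_seconds=0, sleep=lambda s: None)
print(final["status"])  # Completed
```

Injecting the client and the sleep function keeps the polling logic testable, and the same functions work unchanged with a real Boto3 client.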

Test the imported model

Now that you have imported the fine-tuned model into Amazon Bedrock, you can test the model. In this section, we demonstrate how to test the model using the Amazon Bedrock console or the SDK.

Test the model on the Amazon Bedrock console

You can test the imported model using an Amazon Bedrock playground, as illustrated in the following screenshot.

Test the model using the SDK

You can also use the Amazon Bedrock Invoke Model API to run the fine-tuned imported model, as shown in the following code:

The custom Mistral model that you imported using Amazon Bedrock supports temperature, top_p, and max_gen_len parameters when invoking the model for inferencing. The inference parameters top_k, max_seq_len, max_batch_size, and max_new_tokens are not supported for a custom Mistral fine-tuned model.
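The invocation can be sketched as follows, assuming the `invoke_model` method of the Boto3 `bedrock-runtime` client and a request body built from the supported parameters named above. The model ARN and parameter values are placeholders, `invoke_imported_model` is a hypothetical helper, and the stub's response payload is a made-up placeholder rather than the real response schema.

```python
import io
import json


def invoke_imported_model(runtime_client, model_arn, prompt,
                          temperature=0.1, top_p=0.9, max_gen_len=256):
    """Call InvokeModel on an imported model and return the parsed JSON response."""
    body = json.dumps({
        "prompt": prompt,
        "temperature": temperature,
        "top_p": top_p,
        "max_gen_len": max_gen_len,
    })
    response = runtime_client.invoke_model(
        modelId=model_arn,
        body=body,
        contentType="application/json",
        accept="application/json",
    )
    return json.loads(response["body"].read())


class StubRuntime:
    """Stand-in for boto3.client("bedrock-runtime"); returns a placeholder payload."""

    def __init__(self):
        self.last_body = None

    def invoke_model(self, modelId, body, contentType, accept):
        self.last_body = json.loads(body)
        return {"body": io.BytesIO(b'{"placeholder": "stub response"}')}


stub = StubRuntime()
result = invoke_imported_model(
    stub,
    "arn:aws:bedrock:us-east-1:111122223333:imported-model/EXAMPLE",
    "<s>[INST] What is Amazon Bedrock? [/INST]",
)
print(result)
```

With a real client, inspect the returned JSON to find where the generated text lives, because the response layout depends on the imported model.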

Evaluate the imported model

Now that you have imported and tested the model, let’s evaluate it using the SageMaker FMEval library. For more details, refer to Evaluate Bedrock Imported Models. The FMEval library supports out-of-the-box evaluation algorithms for metrics such as accuracy, QA Accuracy, and others detailed in the FMEval documentation. Because you fine-tuned the Mistral model for question answering, you can use the QA Accuracy algorithm, as shown in the following code. For this algorithm, FMEval reports F1 Score, Exact Match Score, Quasi Exact Match Score, Precision Over Words, and Recall Over Words, each computed by comparing the model’s predicted answers against the ground truth answers. The key metrics for question answering tasks are Exact Match, Quasi-Exact Match, and F1 over words.
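To make these metrics concrete, the following is a simplified re-implementation in plain Python. It is not FMEval's code, and details such as the normalization rules (lowercasing, stripping punctuation and English articles) are assumptions modeled on common question answering evaluation practice. The function names are hypothetical.

```python
import re
import string
from collections import Counter


def normalize(text):
    """Lowercase, drop punctuation and English articles, collapse whitespace."""
    text = text.lower()
    text = text.translate(str.maketrans("", "", string.punctuation))
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())


def qa_scores(prediction, target):
    """Score one predicted answer against one ground truth answer."""
    pred_tokens = normalize(prediction).split()
    target_tokens = normalize(target).split()
    # Count words shared between prediction and target (with multiplicity).
    overlap = sum((Counter(pred_tokens) & Counter(target_tokens)).values())
    precision = overlap / len(pred_tokens) if pred_tokens else 0.0
    recall = overlap / len(target_tokens) if target_tokens else 0.0
    f1 = 2 * precision * recall / (precision + recall) if overlap else 0.0
    return {
        "exact_match": float(prediction == target),
        "quasi_exact_match": float(normalize(prediction) == normalize(target)),
        "precision_over_words": precision,
        "recall_over_words": recall,
        "f1_score": f1,
    }


same_after_cleanup = qa_scores("eiffel tower.", "The Eiffel Tower")
partial = qa_scores("Paris", "Paris, France")
print(same_after_cleanup)
print(partial)
```

The first pair fails Exact Match but passes Quasi-Exact Match because the answers differ only in case, punctuation, and an article. The second pair has perfect precision but only half the recall, because the prediction omits one of the two target words.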

You can get the consolidated metrics for the imported model as follows:
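Consolidation amounts to averaging each per-sample score across the dataset. The sketch below shows that step on made-up placeholder scores. The values are not results from the imported model, and `consolidate` is a hypothetical helper rather than an FMEval function.

```python
from statistics import mean


def consolidate(per_sample_scores):
    """Average each metric across samples."""
    metrics = per_sample_scores[0].keys()
    return {m: mean(s[m] for s in per_sample_scores) for m in metrics}


# Placeholder per-sample scores for illustration only.
scores = [
    {"f1_score": 1.0, "exact_match": 1.0},
    {"f1_score": 0.5, "exact_match": 0.0},
]
summary = consolidate(scores)
print(summary)  # {'f1_score': 0.75, 'exact_match': 0.5}
```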

Clean up

To delete the imported model from Amazon Bedrock, navigate to the model on the Amazon Bedrock console. On the options menu (three dots), choose Delete.
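The same deletion can be scripted. This sketch assumes the `delete_imported_model` method of the Boto3 `bedrock` client. The model name is a placeholder, `remove_imported_model` is a hypothetical helper, and a stub replaces the real client so the example runs without credentials.

```python
def remove_imported_model(bedrock_client, model_identifier):
    """Delete an imported model by name or ARN."""
    bedrock_client.delete_imported_model(modelIdentifier=model_identifier)
    return model_identifier


class StubBedrock:
    """Stand-in for boto3.client("bedrock"); records deletions."""

    def __init__(self):
        self.deleted = []

    def delete_imported_model(self, modelIdentifier):
        self.deleted.append(modelIdentifier)
        return {}


stub = StubBedrock()
remove_imported_model(stub, "demo-mistral-model")
print(stub.deleted)  # ['demo-mistral-model']
```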

To delete the SageMaker domain along with the SageMaker JupyterLab space, refer to Delete an Amazon SageMaker domain. You may also want to delete the S3 buckets where the data and model are stored. For instructions, see Deleting a bucket.

Conclusion

In this post, we explained the different aspects of fine-tuning a Mistral model using SageMaker, importing the model into Amazon Bedrock, invoking the model using both an Amazon Bedrock playground and Boto3, and then evaluating the imported model using the FMEval library. You can use this feature to import base FMs or FMs fine-tuned either on premises, on SageMaker, or on Amazon EC2 into Amazon Bedrock and use the models without any heavy lifting in your generative AI applications. Explore the Custom Model Import feature for Amazon Bedrock to deploy FMs fine-tuned for code generation tasks in a secure and scalable manner. Visit our GitHub repository to explore samples prepared for fine-tuning and importing models from various families.

About the Authors

Jay Pillai is a Principal Solutions Architect at Amazon Web Services. In this role, he functions as the Lead Architect, helping partners ideate, build, and launch Partner Solutions. As an Information Technology Leader, Jay specializes in artificial intelligence, generative AI, data integration, business intelligence, and user interface domains. He holds 23 years of extensive experience working with several clients across supply chain, legal technologies, real estate, financial services, insurance, payments, and market research business domains.

Rupinder Grewal is a Senior AI/ML Specialist Solutions Architect with AWS. He currently focuses on serving of models and MLOps on Amazon SageMaker. Prior to this role, he worked as a Machine Learning Engineer building and hosting models. Outside of work, he enjoys playing tennis and biking on mountain trails.

Evandro Franco is a Sr. AI/ML Specialist Solutions Architect at Amazon Web Services. He helps AWS customers overcome business challenges related to AI/ML on top of AWS. He has more than 18 years of experience working with technology, from software development, infrastructure, serverless, to machine learning.

Felipe Lopez is a Senior AI/ML Specialist Solutions Architect at AWS. Prior to joining AWS, Felipe worked with GE Digital and SLB, where he focused on modeling and optimization products for industrial applications.

Sandeep Singh is a Senior Generative AI Data Scientist at Amazon Web Services, helping businesses innovate with generative AI. He specializes in generative AI, artificial intelligence, machine learning, and system design. He is passionate about developing state-of-the-art AI/ML-powered solutions to solve complex business problems for diverse industries, optimizing efficiency and scalability.

Ragha Prasad is a Principal Engineer and a founding member of Amazon Bedrock, where he has had the privilege to listen to customer needs first-hand and understands what it takes to build and launch scalable and secure Gen AI products. Prior to Bedrock, he worked on numerous products in Amazon, ranging from devices to Ads to Robotics.

Paras Mehra is a Senior Product Manager at AWS. He is focused on helping build Amazon SageMaker Training and Processing. In his spare time, Paras enjoys spending time with his family and road biking around the Bay Area.
